Semantic SEO: A Comprehensive Guide to Boosting Traffic and Sales

Welcome to the world of Semantic SEO! In this article, we delve into semantic search optimization, a strategy that is changing the way we approach SEO. The article is based on Pawel Sokolowski’s presentation “SemanticSEO” at SEOCON 2023 and aims to explain what Semantic SEO is and how it can boost traffic and sales.

Understanding Semantic SEO

Semantic SEO is a strategy that aims to optimize content for the meaning and intent of user queries, rather than just matching keywords. It’s about understanding the nuances of language and ensuring that your content aligns with the user’s intent. For search engines, Semantic SEO helps understand the context and relevance of your content, and rank it accordingly. The ultimate goal of Semantic SEO is to facilitate efficient communication between humans and machines, specifically search AI.

Effectiveness of Semantic SEO

Is Semantic SEO effective? The answer is a resounding YES! Semantic SEO has proven to be incredibly effective in improving the visibility and ranking of web content. It has been successfully implemented by various organizations, and in our case studies we show how WebWave, Nexford University, and Decathlon create content that Google adores.

Natural Language Processing and Semantic SEO

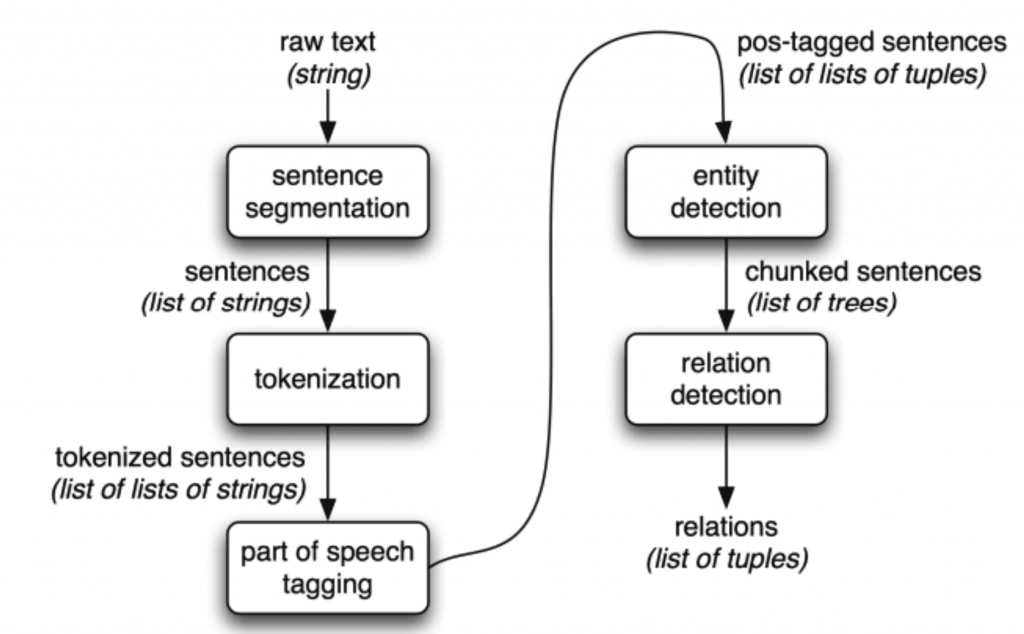

Natural Language Processing (NLP) is a critical component of Semantic SEO. It’s a field of artificial intelligence that focuses on the interaction between computers and humans through language. NLP enables machines to understand, interpret, and generate human language in a valuable and meaningful way. It’s the driving force behind various applications, including translation apps, voice-enabled TV remotes, and of course, search engines.

The Role of NLP in Semantic SEO

NLP plays a pivotal role in Semantic SEO. It allows search engines to understand the content on the web, interpret user queries, and provide relevant search results. NLP combines knowledge about languages and language mechanics, which includes parameters and structure, to understand and interpret the nuances of human language.

NLP in Search: Understanding the Process

The application of NLP in search engines involves several steps:

1. Understanding and Scoring Document Quality

Natural Language Processing (NLP) is instrumental in helping search engines understand the content of a document. It goes beyond merely reading the text; it interprets the meaning behind the words. For instance, NLP can identify whether the term “apple” refers to the fruit or the tech company based on the context in which it’s used.
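To make the “apple” example concrete, here is a minimal sketch of context-based disambiguation. It is a toy illustration, not how any search engine actually works: the cue words in `SENSE_CUES` and the function name `disambiguate` are assumptions made up for this example, and real systems rely on learned language models rather than hand-written word lists.

```python
# Hypothetical cue words for each sense of "apple" (an assumption for this sketch).
SENSE_CUES = {
    "fruit": {"eat", "pie", "orchard", "juice", "tree", "recipe"},
    "company": {"iphone", "mac", "ios", "stock", "ceo", "launch"},
}


def disambiguate(text: str) -> str:
    """Return the sense whose cue words overlap most with the text."""
    # Strip simple punctuation and split the text into lowercase words.
    words = set(text.lower().replace(",", " ").replace(".", " ").split())
    scores = {sense: len(cues & words) for sense, cues in SENSE_CUES.items()}
    best = max(scores, key=scores.get)
    # If no cue word appears at all, the context gives no evidence either way.
    return best if scores[best] > 0 else "unknown"


print(disambiguate("Apple announced a new iPhone at its launch event."))
print(disambiguate("I baked an apple pie with fruit from the orchard."))
```

The first sentence contains company cues and the second contains fruit cues, so the surrounding words decide which meaning wins.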

NLP also assesses the quality of the document. It evaluates factors such as the coherence of the text, the accuracy of the information, and the relevancy of the content to a specific topic or query. High-quality content is more likely to be ranked higher in search results, making this a crucial step in the process.

2. Source Scoring

The credibility of the source of a document is another important factor that NLP takes into account. This could be the domain or the author of the content. For example, an article written by a recognized expert in a field and published on a reputable website is likely to be considered more credible than an article written by an unknown author on an obscure website.

NLP helps in evaluating the credibility of the source by analyzing factors such as the author’s expertise, the website’s domain authority, and the reliability of the information provided in the document. A credible source can significantly enhance the ranking of the content in search results.

Source scoring, also known as domain or site authority, is a concept in SEO that refers to the “trustworthiness” or credibility of a website. Google, while not explicitly confirming the use of a “domain authority” metric, has indicated that the trustworthiness of a site is a factor in its ranking algorithm.

Google determines the credibility of a website based on several factors:

– User Experience and Site Structure

User experience and site structure are integral to source scoring. Google’s algorithms favor sites that are well-structured, easy to navigate, and provide a positive user experience. This includes factors like site speed, mobile-friendliness, and the overall usability of the site.

Site speed is a critical factor as users typically abandon sites that take too long to load. Google recognizes this and prioritizes sites that load quickly in its search results. Similarly, with the increasing prevalence of mobile browsing, having a mobile-friendly site is no longer optional. Google’s Mobile-First Indexing means the search engine predominantly uses the mobile version of the content for indexing and ranking.

The overall navigability of a site also contributes to a better user experience. Sites should be structured logically and intuitively, making it easy for users to find the information they’re looking for. A well-structured site also makes it easier for search engine crawlers to understand and index the site’s content.

– Quality of Content

The quality of the content is also paramount in source scoring. Websites that consistently produce high-quality, original content are seen as more trustworthy. High-quality content is accurate, well-written, and valuable to the reader. It should be original, not duplicated from other sites, and it should provide unique insights or information.

Google’s algorithms are designed to understand and appreciate good content. They can assess factors like the depth of the content, the accuracy of the information, and even the writing style. Sites that regularly publish high-quality content are likely to score higher in terms of source credibility.

– Backlink Profile

The backlink profile of a site plays a significant role in its source scoring. Backlinks are links from one website to a page on another website. Google and other major search engines consider backlinks “votes” for a specific page. Pages with a high number of backlinks tend to have high organic search engine rankings.

However, not all backlinks are valued the same. Links from relevant, well-respected websites are more valuable than links from low-quality sites. Google’s algorithms are sophisticated and can identify and penalize sites that try to game the system by building low-quality or spammy backlinks.

Taken together, source scoring is a multifaceted process that takes into account numerous factors. By optimizing these factors, you can improve your site’s credibility, enhance its visibility on search engines, and ultimately drive more traffic to your site.

Domain Authority (DA) is a metric developed by Moz, a leading SEO software company. It predicts how well a website will rank on search engine result pages (SERPs). DA combines multiple factors, including the total number of links and linking root domains, into a single score. This score can then be used to compare websites or track the “ranking strength” of a website over time.

It’s important to note that while Domain Authority may provide some insight into how a website’s search engine rankings may perform, it is not a metric used by Google in determining search rankings and does not influence the SERPs directly.

In conclusion, while we don’t have specific details from Google patents, it’s widely accepted in the SEO community that the credibility of a website, its content, and its backlink profile play a significant role in determining its ranking in search results.

3. Understanding User Query

Interpreting user queries is one of the most critical roles of NLP in search engines. It’s not just about understanding the words used in the query; it’s about understanding the intent behind those words. For instance, if a user searches for “how to bake a cake,” the intent is likely to find a recipe or a step-by-step guide on baking a cake.

NLP uses various techniques, such as semantic analysis and sentiment analysis, to understand the user’s intent. It then uses this understanding to provide results that best match the user’s intent.

4. Aligning Results to User Intent

Once the user’s intent is understood, NLP helps in aligning the search results to this intent. This involves matching the user’s query with the most relevant documents. For example, if the user’s intent is to find a cake recipe, NLP will match this intent with documents that provide cake recipes.

NLP ensures that the most relevant and useful results are displayed to the user. This not only improves the user’s search experience but also increases the likelihood of the user finding the information they’re looking for.

5. Scoring SERP Results

Scoring the Search Engine Results Page (SERP) results is another crucial role of NLP. It helps in determining the order in which the search results should be displayed. This involves evaluating each document’s relevancy to the user’s query and the quality of the content.

NLP uses various algorithms to score the SERP results. These algorithms take into account factors such as the document’s relevancy to the query, the quality of the content, and the credibility of the source. The documents with the highest scores are displayed at the top of the search results.

6. Modification of Document Scores

Based on the user’s interaction with the search results, NLP helps in modifying the scores of the documents. For instance, if a user clicks on a search result and spends a significant amount of time on the page, it’s likely that the document was relevant and useful to the user. This could lead to an increase in the document’s score.

On the other hand, if a user quickly returns to the search results after clicking on a document, it could indicate that the document was not relevant or useful. This could lead to a decrease in the document’s score. By modifying document scores based on user behavior, NLP ensures that the most relevant and useful content is ranked higher in future searches.

This feedback loop allows search engines to continually refine and improve the relevance of their search results.

This process of modifying document scores is crucial in ensuring that the most relevant and useful content is ranked higher in future searches. It’s a dynamic process that adapts to user behavior and feedback, ensuring that the search engine continually improves its understanding of what users find valuable and relevant.
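The feedback loop described above can be sketched in a few lines. This is only an illustration under stated assumptions: the thresholds (`short_visit`, `long_visit`), the `step` size, and the 0 to 1 score range are invented for this example, and the actual signals and weights search engines use are not public.

```python
def adjust_score(score: float, dwell_seconds: float,
                 short_visit: float = 10.0, long_visit: float = 60.0,
                 step: float = 0.05) -> float:
    """Nudge a document score up or down based on how long the user stayed.

    All thresholds and the step size are hypothetical values for illustration.
    """
    if dwell_seconds >= long_visit:
        score += step   # a long visit suggests the page was useful
    elif dwell_seconds <= short_visit:
        score -= step   # a quick return to the SERP suggests it was not
    # Keep the score inside the assumed 0..1 range.
    return min(1.0, max(0.0, score))


print(adjust_score(0.5, dwell_seconds=120))  # long visit: score goes up
print(adjust_score(0.5, dwell_seconds=3))    # quick bounce: score goes down
```

Visits between the two thresholds leave the score unchanged, which reflects the idea that ambiguous behavior should not move rankings.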

In conclusion, NLP plays a pivotal role in Semantic SEO, from understanding and scoring document quality to modifying document scores based on user interaction. By leveraging NLP, Semantic SEO can provide more accurate, relevant, and personalized search results, leading to a better user experience and increased website traffic and sales.

NLP and Semantic SEO: The Perfect Synergy

NLP and Semantic SEO work together to improve the search experience for users. While NLP helps machines understand human language, Semantic SEO ensures that this understanding is used to optimize content for search engines. This synergy between NLP and Semantic SEO allows for more efficient communication between humans and machines, leading to more relevant search results and a better user experience.

How Search Engines Process Queries

Search engines process information in three stages: before the query, during the query, and after the query.

Before the Query: The Foundations of Search

Before a query is made, search engines perform document analysis and matching, which involves understanding documents, analyzing their quality, and assessing the authority of the source. This process includes URL discovery, crawling, indexation, and document processing. Search engines also use knowledge graphs to understand the expertise of a document. These graphs classify information into categories like facts, places, people, documents, products, music, etc., with relations between elements.

– URL Discovery

The first step in the process is URL discovery. Search engines need to know what pages exist on the web before they can analyze and rank them. They discover new URLs in several ways:

1. Sitemaps: Webmasters can provide sitemaps to search engines, which are essentially lists of the pages on a website that should be crawled and indexed.

2. Links: Search engines also discover new URLs by following links from known pages to new ones. This is why having a good internal linking structure and earning backlinks from other websites is crucial for SEO.

3. Direct Submission: Webmasters can also directly submit pages to be crawled using search engine tools like Google’s URL Inspection Tool.
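As an illustration of the sitemap route, the sketch below reads the page URLs out of a sitemap using Python’s standard library. The sample sitemap and the `example.com` URLs are placeholders invented for this example; the XML namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# A tiny made-up sitemap used only for this example.
SAMPLE_SITEMAP = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/semantic-seo</loc></url>
</urlset>"""


def urls_from_sitemap(xml_text: str) -> list[str]:
    """Return every <loc> URL listed in a sitemap document."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    return [loc.text.strip()
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
            if loc.text]


for url in urls_from_sitemap(SAMPLE_SITEMAP):
    print(url)
```

A crawler would typically add these URLs to its queue of pages to visit.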

– Crawling

Once search engines know about a URL, they need to visit, or “crawl,” the page to understand its content. Search engine bots, often called spiders or crawlers, visit these pages and follow links on those pages to discover more content.
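The “follow links to discover more content” step can be sketched with Python’s built-in HTML parser. This is a simplified illustration, not a production crawler: the names `LinkCollector` and `extract_links` are made up for this example, and the sketch skips everything a real bot has to handle, such as fetching pages, respecting robots.txt, and deduplicating URLs.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect absolute URLs from the <a href="..."> tags of one page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, value))


def extract_links(html: str, base_url: str) -> list[str]:
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links


page = '<p><a href="/about">About</a> <a href="https://other.example/x">X</a></p>'
print(extract_links(page, "https://example.com/blog/"))
```

Each discovered link becomes a candidate for the next round of crawling, which is why internal linking matters for discoverability.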

– Indexation

After a page is crawled, it’s then indexed, which means it’s processed and added to the search engine’s database. During indexation, the search engine analyzes the content, images, and videos on the page, along with meta-data like title tags and meta descriptions.

– Document Analysis and Matching

Document analysis and matching is a crucial part of the “before the query” process. This involves understanding the content of a document, analyzing its quality, and assessing the authority of the source.

As mentioned, search engines use complex algorithms and technologies like Natural Language Processing (NLP) to analyze and understand the content. They look at factors like the quality of the content, the relevance to specific topics, and the credibility of the source.

The quality of the content is assessed based on factors like the depth and breadth of the information, the accuracy of the facts, and the overall coherence and readability of the text. The relevance of the content to specific topics is determined by analyzing the keywords and concepts in the text and understanding the overall context and meaning of the content.

The authority of the source is evaluated based on factors like the reputation of the domain, the credibility of the author, and the number and quality of other sites that link to the page. A high-quality, relevant document from a credible source is likely to be ranked higher in search results.

– Understanding Through Knowledge Graphs

Knowledge graphs play a crucial role in understanding the content of a document. A knowledge graph is a database that stores millions of pieces of information about the world and the relationships between them.

Search engines use knowledge graphs to understand the context and relevance of a document. For example, if a document mentions Paris, the search engine can use its knowledge graph to understand that Paris is a city in France, that France is a country in Europe, and that Europe is a continent on Earth. This understanding allows the search engine to assess the relevance of the document to different queries.

Knowledge graphs classify information into categories like facts, places, people, documents, products, music, etc., with relations between elements. This classification helps search engines understand the expertise of a document and match it with relevant queries.
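The Paris example can be modeled as a handful of subject, relation, object triples. The sketch below is a toy model: the relation names `is_a` and `located_in` and both helper functions are assumptions chosen for this example, and a real knowledge graph holds vastly more entities and relation types.

```python
# Each fact is a (subject, relation, object) triple.
TRIPLES = [
    ("Paris", "is_a", "city"),
    ("Paris", "located_in", "France"),
    ("France", "is_a", "country"),
    ("France", "located_in", "Europe"),
    ("Europe", "is_a", "continent"),
]


def entity_type(entity: str) -> str:
    """Look up the category of an entity via its 'is_a' relation."""
    types = {s: o for s, p, o in TRIPLES if p == "is_a"}
    return types.get(entity, "unknown")


def containing_places(entity: str) -> list[str]:
    """Follow 'located_in' relations upward, e.g. Paris -> France -> Europe."""
    located_in = {s: o for s, p, o in TRIPLES if p == "located_in"}
    chain: list[str] = []
    current = entity
    # Stop when there is no parent, or when a parent repeats (guards against cycles).
    while current in located_in and located_in[current] not in chain:
        current = located_in[current]
        chain.append(current)
    return chain


print(entity_type("Paris"))
print(containing_places("Paris"))
```

Walking the relations is what lets a document about Paris be considered for queries about France or Europe, even if those words never appear on the page.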

In conclusion, the “before the query” process involves a complex interplay of document analysis, source evaluation, and knowledge graph understanding. By optimizing your content for this process, you can improve its visibility and ranking in search results.

During the Query

During the query, search engines work on defining the best answers. They understand the user and match documents to display the most relevant answers. This involves understanding synonyms, relations, variants, anchors, and history. The search engine pairs the query with the document and calculates an intent boost factor and a document boost factor to determine the best documents to display.

– Query Understanding and Interpretation

The first step in the “during the query” process is understanding and interpreting the user’s query. This involves not just understanding the words used in the query, but also the intent behind those words. For instance, if a user searches for “apple,” they could be looking for information about the fruit, or they could be looking for information about Apple Inc., the technology company. Understanding this intent is crucial for providing relevant search results.

Search engines use Natural Language Processing (NLP) techniques to understand and interpret user queries. These techniques involve understanding the semantics (meaning) and syntax (structure) of the query. For instance, the query “What is the weather like in New York?” has a clear intent (to find out the current weather in New York) and a clear structure (it is a question).

– Query Expansion

Once the search engine understands the user’s query, it can then expand the query to include related terms and concepts. This process, known as query expansion, helps to improve the accuracy and relevance of the search results.

For instance, if a user searches for “running shoes,” the search engine might expand the query to include related terms like “sneakers,” “athletic footwear,” and “trainers.” This ensures that the search results include all relevant documents, even if they use different terminology.

Query expansion can also involve correcting spelling mistakes, recognizing synonyms, and understanding homonyms (words that are spelled or pronounced the same but have different meanings).
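A minimal sketch of synonym-based query expansion, using the “running shoes” example from above. The `SYNONYMS` table is a hand-written assumption for this illustration; real search engines derive related terms from large-scale language data rather than a fixed dictionary.

```python
# Hypothetical synonym table for illustration only.
SYNONYMS = {
    "running shoes": ["sneakers", "athletic footwear", "trainers"],
}


def expand_query(query: str) -> list[str]:
    """Return the normalized query followed by any known related terms."""
    # Lowercase and collapse repeated whitespace so lookups are consistent.
    normalized = " ".join(query.lower().split())
    return [normalized] + SYNONYMS.get(normalized, [])


print(expand_query("Running  Shoes"))
```

Documents matching any of the expanded terms can then be considered relevant, even if they never use the user’s exact wording.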

– Document Matching

The next step in the “during the query” process is document matching. This involves matching the user’s query to relevant documents in the search engine’s index.

Document matching involves comparing the words and concepts in the query to the words and concepts in each document. The goal is to find the documents that are most relevant to the user’s query.

Search engines use various algorithms to match queries to documents. These algorithms take into account factors like the frequency and location of keywords in the document, the overall relevance of the document to the query, and the quality and authority of the document.
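To illustrate how keyword frequency and location might feed into matching, here is a deliberately simple scoring sketch. The function `match_score` and the `title_weight` of 2.0 are assumptions made for this example; real matching algorithms are far more sophisticated and are not publicly documented in this form.

```python
from collections import Counter


def match_score(query: str, title: str, body: str,
                title_weight: float = 2.0) -> float:
    """Score a document by how often the query terms appear in it.

    Matches in the title count more than matches in the body, reflecting
    the idea that keyword location matters. The weight is hypothetical.
    """
    terms = query.lower().split()
    title_counts = Counter(title.lower().split())
    body_counts = Counter(body.lower().split())
    return sum(title_weight * title_counts[t] + body_counts[t] for t in terms)


print(match_score("cake recipe", "Easy cake recipe", "this cake is simple"))
print(match_score("cake recipe", "Car repair", "engine oil"))
```

The first document scores well because both query terms appear in its title, while the second shares no terms with the query and scores zero.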

– Ranking and Personalization

The final step in the “during the query” process is ranking the results. This involves determining the order in which the search results are displayed.

Search engines use complex ranking algorithms to rank the results. These algorithms take into account factors like the relevance of the document to the query, the quality of the content, the authority of the source, and the user’s personal preferences and search history.

Personalization is a key aspect of this process. Search engines use information about the user, such as their location, search history, and preferences, to personalize the search results. For example, a user in New York who searches for “pizza” will see different results than a user in Chicago who performs the same search.
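The ranking step can be pictured as combining several signals into one score and sorting by it. The sketch below is an assumption-laden illustration: the `Candidate` fields, the `WEIGHTS` values, and the weighted-sum formula are all invented for this example and do not describe any search engine’s actual ranking function.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float   # how well the document matches the query (0..1)
    quality: float     # content quality (0..1)
    authority: float   # source credibility (0..1)


# Hypothetical weights chosen only for this example.
WEIGHTS = {"relevance": 0.5, "quality": 0.3, "authority": 0.2}


def combined_score(c: Candidate) -> float:
    return (WEIGHTS["relevance"] * c.relevance
            + WEIGHTS["quality"] * c.quality
            + WEIGHTS["authority"] * c.authority)


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Return candidates ordered from highest to lowest combined score."""
    return sorted(candidates, key=combined_score, reverse=True)


results = rank([
    Candidate("https://example.com/a", relevance=0.9, quality=0.5, authority=0.5),
    Candidate("https://example.com/b", relevance=0.6, quality=0.9, authority=0.9),
])
for r in results:
    print(r.url, round(combined_score(r), 2))
```

In this made-up example the second page outranks the first despite lower relevance, because its quality and authority are much stronger. Personalization could be modeled as one more signal in the same sum.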

In conclusion, the “during the query” process involves understanding and interpreting the user’s query, expanding the query, matching the query to relevant documents, and ranking the results. By understanding this process, you can optimize your content to better match user intent and achieve higher rankings in search results.

After the Query

After the query, search engines analyze behavioral factors like click-through rates (CTR), dwell time, click patterns, time on search, and time on page. They also conduct A/B testing and modify document scoring based on the results.
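Two of these behavioral metrics are easy to compute yourself from analytics data. The sketch below assumes you already have click, impression, and per-visit dwell time numbers; the function names and the sample figures are made up for illustration.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """CTR is the share of impressions that resulted in a click."""
    return clicks / impressions if impressions else 0.0


def average_dwell_time(visit_seconds: list[float]) -> float:
    """Mean time users spent on the page, in seconds."""
    return sum(visit_seconds) / len(visit_seconds) if visit_seconds else 0.0


# Sample figures invented for this example.
print(f"CTR: {click_through_rate(25, 1000):.1%}")
print(f"Average dwell time: {average_dwell_time([30.0, 90.0, 60.0]):.0f} s")
```

Tracking these numbers over time for your own pages gives a rough view of how users respond to your content in the search results.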

Creating Content that Google Adores

To create content that Google adores, you need to understand and apply Semantic SEO strategies, which can significantly boost your website’s traffic and sales. Applying these principles lets you create content that both users and search engines will love. If you have any questions or need further assistance, feel free to reach out to us at webchat for more information.